Solves automatic numerical differentiation problems in one or more variables.

Numdifftools

Numdifftools is a suite of tools written in Python to solve automatic numerical differentiation problems in one or more variables. Finite differences are used in an adaptive manner, coupled with a Richardson extrapolation methodology to provide a maximally accurate result. The user can configure many options, such as the order of the method or of the extrapolation, and can specify whether complex-step, central, forward or backward differences are used.
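The combination of finite differences and Richardson extrapolation described above can be sketched in plain Python. This is only an illustration under simplifying assumptions (a fixed initial step, step halving, central differences only), not the adaptive procedure numdifftools itself uses. The names `central_difference` and `richardson_derivative` are hypothetical and not part of the package.

```python
import math


def central_difference(f, x, h):
    """Second-order central difference approximation of f'(x)."""
    return (f(x + h) - f(x - h)) / (2.0 * h)


def richardson_derivative(f, x, h=0.1, levels=4):
    """Extrapolate central differences computed with steps h, h/2, h/4, ...

    Returns (estimate, rough_error). The error of a central difference
    contains only even powers of h, so each extrapolation stage cancels
    one more term using the factor 4**k.
    """
    if levels < 2:
        raise ValueError("levels must be at least 2")
    # Column 0 of row i holds the raw central difference with step h / 2**i.
    table = [[central_difference(f, x, h / 2 ** i)] for i in range(levels)]
    for i in range(1, levels):
        for k in range(1, i + 1):
            factor = 4.0 ** k
            better = (factor * table[i][k - 1] - table[i - 1][k - 1]) / (factor - 1.0)
            table[i].append(better)
    estimate = table[-1][-1]
    # The gap between the two most refined values serves as a crude error estimate.
    rough_error = abs(table[-1][-1] - table[-1][-2])
    return estimate, rough_error


estimate, rough_error = richardson_derivative(math.exp, 1.0)
print(estimate, rough_error)
```

The second return value mirrors, in a very simplified form, the idea of reporting an error estimate alongside the derivative.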

The methods provided are:

Derivative: Compute the derivatives of order 1 through 10 on any scalar function.

Gradient: Compute the gradient vector of a scalar function of one or more variables.

Jacobian: Compute the Jacobian matrix of a vector valued function of one or more variables.

Hessian: Compute the Hessian matrix of all 2nd partial derivatives of a scalar function of one or more variables.

Hessdiag: Compute only the diagonal elements of the Hessian matrix.

All of these methods also produce error estimates on the result.
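To make the quantities in the list above concrete, here is a small sketch of a gradient and a Hessian diagonal computed with fixed-step central differences in plain Python. It is not how numdifftools implements `Gradient` or `Hessdiag` (there is no adaptive step selection or extrapolation here), and the helper names `gradient_fd` and `hessdiag_fd` are hypothetical.

```python
def gradient_fd(f, x, h=1e-5):
    """Central-difference gradient of a scalar function f at the point x."""
    grad = []
    for i in range(len(x)):
        x_plus, x_minus = list(x), list(x)
        x_plus[i] += h
        x_minus[i] -= h
        grad.append((f(x_plus) - f(x_minus)) / (2.0 * h))
    return grad


def hessdiag_fd(f, x, h=1e-4):
    """Diagonal of the Hessian of f at x from second central differences."""
    f0 = f(x)
    diag = []
    for i in range(len(x)):
        x_plus, x_minus = list(x), list(x)
        x_plus[i] += h
        x_minus[i] -= h
        diag.append((f(x_plus) - 2.0 * f0 + f(x_minus)) / h ** 2)
    return diag


def quadratic(x):
    """Test function x0**2 + 3*x1**2 + x0*x1."""
    return x[0] ** 2 + 3.0 * x[1] ** 2 + x[0] * x[1]


print(gradient_fd(quadratic, [1.0, 2.0]))   # analytic gradient is [4, 13]
print(hessdiag_fd(quadratic, [1.0, 2.0]))   # analytic diagonal is [2, 6]
```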

Numdifftools also provides an easy-to-use interface to derivatives calculated with AlgoPy. AlgoPy stands for Algorithmic Differentiation in Python. The purpose of AlgoPy is the evaluation of higher-order derivatives in the forward and reverse mode of Algorithmic Differentiation (AD) of functions that are implemented as Python programs.
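For readers new to AD, the forward mode can be illustrated with dual numbers: each value carries its derivative along, and every operation applies the chain rule exactly. The sketch below is a first-order toy in plain Python, written only to show the idea. It is not AlgoPy's API or implementation, and the `Dual` class and the helpers `exp` and `derivative` are hypothetical names.

```python
import math


class Dual:
    """A value together with its derivative with respect to the input."""

    def __init__(self, value, deriv=0.0):
        self.value = value
        self.deriv = deriv

    @staticmethod
    def _lift(other):
        # Treat plain numbers as constants (derivative zero).
        return other if isinstance(other, Dual) else Dual(float(other))

    def __add__(self, other):
        other = Dual._lift(other)
        return Dual(self.value + other.value, self.deriv + other.deriv)

    __radd__ = __add__

    def __mul__(self, other):
        other = Dual._lift(other)
        # Product rule: (uv)' = u'v + uv'
        return Dual(self.value * other.value,
                    self.deriv * other.value + self.value * other.deriv)

    __rmul__ = __mul__


def exp(x):
    """Exponential that propagates derivatives through Dual arguments."""
    if isinstance(x, Dual):
        e = math.exp(x.value)
        return Dual(e, e * x.deriv)
    return math.exp(x)


def derivative(f, x):
    """Evaluate f'(x) by seeding the input derivative with 1."""
    return f(Dual(x, 1.0)).deriv


# d/dx [x*exp(x) + 3x] = exp(x) + x*exp(x) + 3
print(derivative(lambda x: x * exp(x) + 3 * x, 1.0))
```

Unlike finite differences, this involves no step size, so the result is exact up to floating-point rounding.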

Getting Started

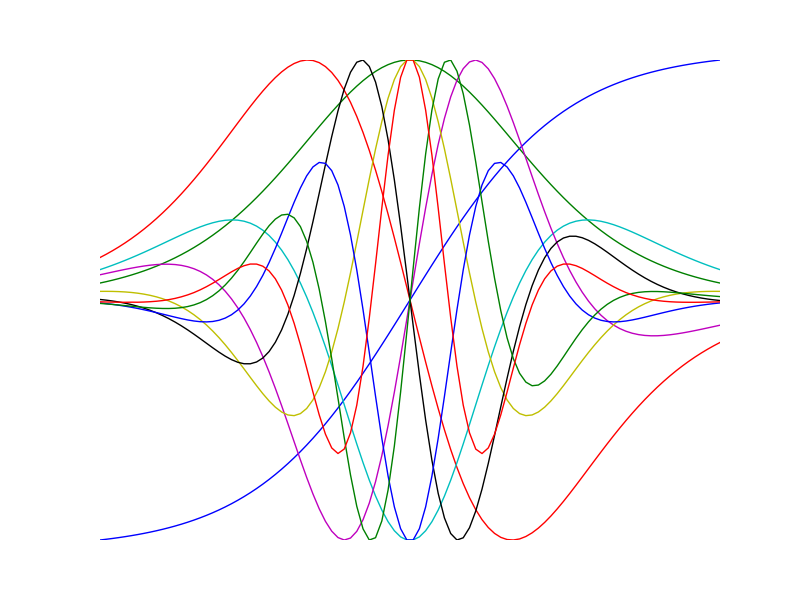

Visualize high order derivatives of the tanh function:

>>> import numpy as np
>>> import numdifftools as nd
>>> import matplotlib.pyplot as plt
>>> x = np.linspace(-2, 2, 100)
>>> for i in range(10):
...     df = nd.Derivative(np.tanh, n=i)
...     y = df(x)
...     plt.plot(x, y/np.abs(y).max())
>>> plt.show()

Compute 1st and 2nd derivative of exp(x) at x == 1:

>>> fd = nd.Derivative(np.exp) # 1st derivative
>>> fdd = nd.Derivative(np.exp, n=2) # 2nd derivative
>>> np.allclose(fd(1), 2.7182818284590424)
True
>>> np.allclose(fdd(1), 2.7182818284590424)
True
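The difference schemes named in the project description (forward, backward, central and complex-step) differ mainly in accuracy for a given step. The following plain-Python sketch compares them on the same problem, the first derivative of exp(x) at x = 1. The step sizes are arbitrary choices for illustration and the function names are hypothetical; numdifftools selects steps adaptively rather than using fixed values like these.

```python
import cmath
import math


def forward(f, x, h=1e-6):
    """First-order forward difference."""
    return (f(x + h) - f(x)) / h


def backward(f, x, h=1e-6):
    """First-order backward difference."""
    return (f(x) - f(x - h)) / h


def central(f, x, h=1e-6):
    """Second-order central difference."""
    return (f(x + h) - f(x - h)) / (2.0 * h)


def complex_step(f, x, h=1e-20):
    """Complex-step derivative; f must accept complex arguments.

    There is no subtraction of nearly equal numbers, so h can be tiny.
    """
    return f(complex(x, h)).imag / h


exact = math.e
for name, value in [
    ("forward", forward(math.exp, 1.0)),
    ("backward", backward(math.exp, 1.0)),
    ("central", central(math.exp, 1.0)),
    ("complex", complex_step(cmath.exp, 1.0)),
]:
    print(name, abs(value - exact))
```

The complex-step variant only works for functions that are analytic and implemented for complex arguments, which is why it is an option rather than the default choice here.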

Nonlinear least squares:

>>> xdata = np.reshape(np.arange(0,1,0.1),(-1,1))
>>> ydata = 1+2*np.exp(0.75*xdata)
>>> fun = lambda c: (c[0]+c[1]*np.exp(c[2]*xdata) - ydata)**2
>>> Jfun = nd.Jacobian(fun)
>>> np.allclose(np.abs(Jfun([1,2,0.75])), 0) # should be numerically zero
True
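The Jacobian in this example vanishes because `fun` returns squared residuals: each entry has derivative 2 * r * dr/dc, and the residual r is exactly zero at the parameters that generated the data. A plain-Python sketch of the same check with a fixed-step central-difference Jacobian is given below. The helper `jacobian_fd` is a hypothetical stand-in, not the numdifftools implementation.

```python
import math

# Same model as above: y = c0 + c1 * exp(c2 * x), with true c = (1, 2, 0.75).
xdata = [0.1 * i for i in range(10)]
ydata = [1.0 + 2.0 * math.exp(0.75 * x) for x in xdata]


def squared_residuals(c):
    """Vector of squared residuals, one entry per data point."""
    return [(c[0] + c[1] * math.exp(c[2] * x) - y) ** 2
            for x, y in zip(xdata, ydata)]


def jacobian_fd(f, c, h=1e-6):
    """Central-difference Jacobian: rows are outputs, columns are parameters."""
    columns = []
    for j in range(len(c)):
        c_plus, c_minus = list(c), list(c)
        c_plus[j] += h
        c_minus[j] -= h
        f_plus, f_minus = f(c_plus), f(c_minus)
        columns.append([(p - m) / (2.0 * h) for p, m in zip(f_plus, f_minus)])
    # Transpose the list of columns into a list of rows.
    return [list(row) for row in zip(*columns)]


J = jacobian_fd(squared_residuals, [1.0, 2.0, 0.75])
print(len(J), len(J[0]))                                # 10 rows, 3 columns
print(max(abs(entry) for row in J for entry in row))    # numerically zero
```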

Compute gradient of sum(x**2):

>>> fun = lambda x: np.sum(x**2)
>>> dfun = nd.Gradient(fun)
>>> dfun([1,2,3])
array([ 2., 4., 6.])

Compute the same with the easy-to-use interface to AlgoPy:

>>> import numdifftools.nd_algopy as nda
>>> import numpy as np
>>> fd = nda.Derivative(np.exp) # 1st derivative
>>> fdd = nda.Derivative(np.exp, n=2) # 2nd derivative
>>> np.allclose(fd(1), 2.7182818284590424)
True
>>> np.allclose(fdd(1), 2.7182818284590424)
True

Nonlinear least squares:

>>> xdata = np.reshape(np.arange(0,1,0.1),(-1,1))
>>> ydata = 1+2*np.exp(0.75*xdata)
>>> fun = lambda c: (c[0]+c[1]*np.exp(c[2]*xdata) - ydata)**2
>>> Jfun = nda.Jacobian(fun, method='reverse')
>>> np.allclose(np.abs(Jfun([1,2,0.75])), 0) # should be numerically zero
True

Compute gradient of sum(x**2):

>>> fun = lambda x: np.sum(x**2)
>>> dfun = nda.Gradient(fun)
>>> dfun([1,2,3])
array([ 2., 4., 6.])

See also

scipy.misc.derivative

Documentation and code

Numdifftools works on Python 2.7+ and Python 3.0+.

Official releases available at: http://pypi.python.org/pypi/numdifftools

Official documentation available at: http://numdifftools.readthedocs.org/

Bleeding edge: https://github.com/pbrod/numdifftools.

Installation and upgrade:

with pip

$ pip install numdifftools

with conda

$ conda install -c https://conda.anaconda.org/pbrod numdifftools

with easy_install

$ easy_install numdifftools

or

$ easy_install --upgrade numdifftools

to upgrade to the newest version.

Unit tests

To test if the toolbox is working, paste the following into an interactive Python session:

import numdifftools as nd
nd.test(coverage=True, doctests=True)

Hashes for numdifftools-0.9.14-py2.py3-none-any.whl

| Algorithm | Hash digest |
|---|---|
| SHA256 | 0b5044f572dfad945253a7448a820505f4d37182bef5a31668911800eb1ad605 |
| MD5 | 934528eae7558d4b194eddcef8ff58cf |
| BLAKE2b-256 | 365a5f362a69c1d7b9506a3c2285fab751e8e309ec922efb60083c35908ce2e3 |