A multi-lingual approach to AllenNLP CoReference Resolution, along with a wrapper for spaCy.

Project description

Crosslingual Coreference

Coreference is amazing but the data required for training a model is very scarce. In our case, the available training for non-English languages also proved to be poorly annotated. Crosslingual Coreference, therefore, uses the assumption a trained model with English data and cross-lingual embeddings should work for languages with similar sentence structures.

Install

pip install crosslingual-coreference

Quickstart

from crosslingual_coreference import Predictor

text = (

"Do not forget about Momofuku Ando! He created instant noodles in Osaka. At"

" that location, Nissin was founded. Many students survived by eating these"

" noodles, but they don't even know him."

)

predictor = Predictor(

language="en_core_web_sm", device=-1, model_name="info_xlm"

)

print(predictor.predict(text)["resolved_text"])

# Output

#

# Do not forget about Momofuku Ando!

# Momofuku Ando created instant noodles in Osaka.

# At Osaka, Nissin was founded.

# Many students survived by eating instant noodles,

# but Many students don't even know Momofuku Ando.

Chunking/batching to resolve memory OOM errors

from crosslingual_coreference import Predictor

predictor = Predictor(

language="en_core_web_sm",

device=0,

model_name="info_xlm",

chunk_size=2500,

chunk_overlap=2,

)

Use spaCy pipeline

import spacy

import crosslingual_coreference

text = (

"Do not forget about Momofuku Ando! He created instant noodles in Osaka. At"

" that location, Nissin was founded. Many students survived by eating these"

" noodles, but they don't even know him."

)

nlp = spacy.load("en_core_web_sm")

nlp.add_pipe(

"xx_coref", config={"chunk_size": 2500, "chunk_overlap": 2, "device": 0}

)

doc = nlp(text)

print(doc._.coref_clusters)

# Output

#

# [[[4, 5], [7, 7], [27, 27], [36, 36]],

# [[12, 12], [15, 16]],

# [[9, 10], [27, 28]],

# [[22, 23], [31, 31]]]

print(doc._.resolved_text)

# Output

#

# Do not forget about Momofuku Ando!

# Momofuku Ando created instant noodles in Osaka.

# At Osaka, Nissin was founded.

# Many students survived by eating instant noodles,

# but Many students don't even know Momofuku Ando.

Available models

As of now, there are two models available "info_xlm", "xlm_roberta", which scored 77 and 74 on OntoNotes Release 5.0 English data, respectively.

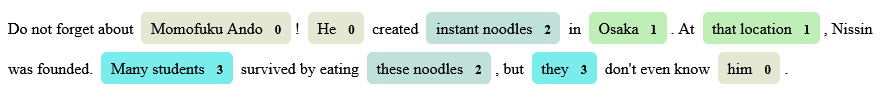

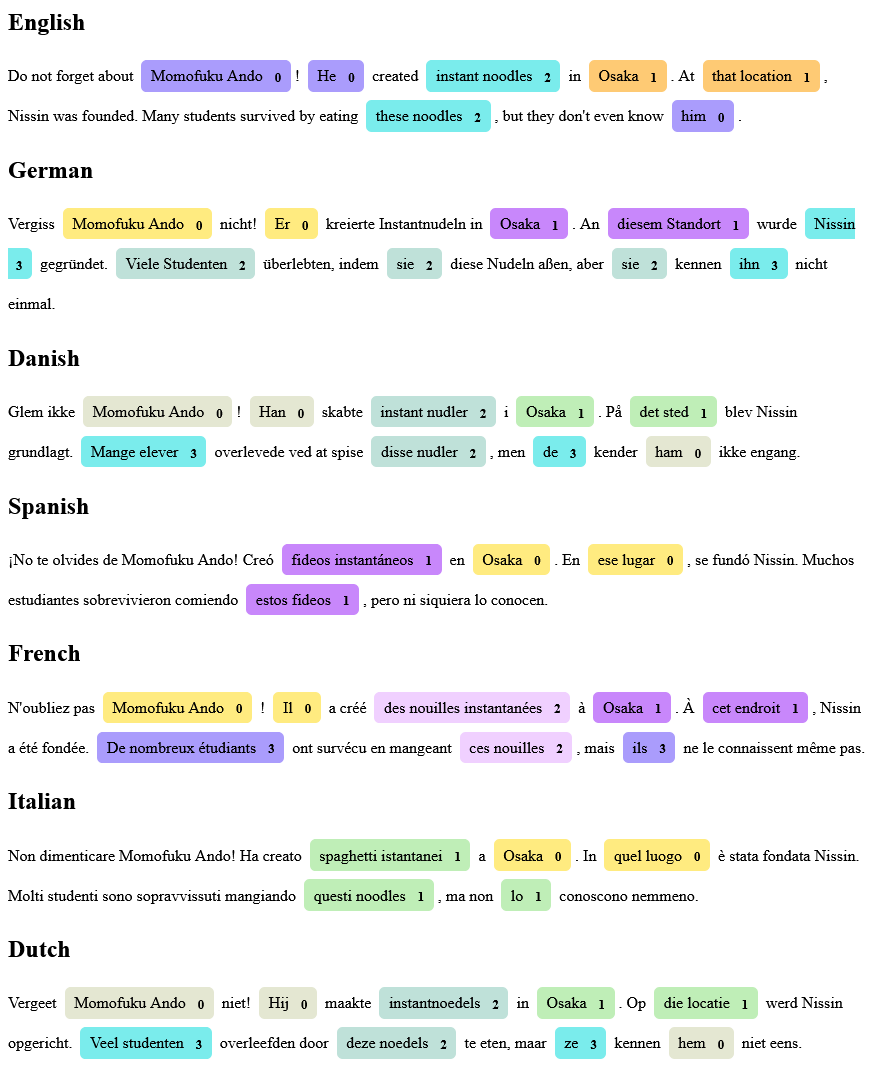

More Examples

Project details

Release history Release notifications | RSS feed

Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

Source Distribution

Built Distribution

Close

Hashes for crosslingual-coreference-0.2.1.tar.gz

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 | 4f72fba0d5d251655d269359edab0b6f3b1dbd1140bb1d039ab8aead14e42c7a |

|

| MD5 | 9e0b1860309acb3079c0147e17787eb1 |

|

| BLAKE2b-256 | d513029a62f2a87e32eef711a8f6709d025d1069b547759a9d60aade8f2c320a |

Close

Hashes for crosslingual_coreference-0.2.1-py3-none-any.whl

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 | 1072d9393c987d9ae9135522040b73c225c64fcdf7cc05c3814be26b9c2b0159 |

|

| MD5 | c39d5648577e940d1816e8c5658e0329 |

|

| BLAKE2b-256 | 9b0297702027ab38283d18c062c0f662355fe801c9909464f42540708c169435 |