optimizer & lr scheduler collections in PyTorch


Getting Started

For more, see the documentation.

Most optimizers are licensed under MIT or Apache 2.0, but a few, such as Fromage and Nero, are under CC BY-NC-SA 4.0, which prohibits commercial use. Please double-check the license of each optimizer before using it at work.

Installation

$ pip3 install -U pytorch-optimizer

If you hit a version issue when installing the package, try the --no-deps option.

$ pip3 install -U --no-deps pytorch-optimizer

Simple Usage

from pytorch_optimizer import AdamP

model = YourModel()

optimizer = AdamP(model.parameters())

# or you can use optimizer loader, simply passing a name of the optimizer.

from pytorch_optimizer import load_optimizer

model = YourModel()

opt = load_optimizer(optimizer='adamp')

optimizer = opt(model.parameters())

You can also load the optimizer via torch.hub:

import torch

model = YourModel()

opt = torch.hub.load('kozistr/pytorch_optimizer', 'adamp')

optimizer = opt(model.parameters())

If you want to build the optimizer together with its parameters and configs, use the create_optimizer() API.

from pytorch_optimizer import create_optimizer

optimizer = create_optimizer(

model,

'adamp',

lr=1e-3,

weight_decay=1e-3,

use_gc=True,

use_lookahead=True,

)

Supported Optimizers

You can list the supported optimizers with the code below.

from pytorch_optimizer import get_supported_optimizers

supported_optimizers = get_supported_optimizers()

| Optimizer | Description |
|---|---|
| AdaBelief | Adapting Step-sizes by the Belief in Observed Gradients |
| AdaBound | Adaptive Gradient Methods with Dynamic Bound of Learning Rate |
| AdaHessian | An Adaptive Second Order Optimizer for Machine Learning |
| AdamD | Improved bias-correction in Adam |
| AdamP | Slowing Down the Slowdown for Momentum Optimizers on Scale-invariant Weights |
| diffGrad | An Optimization Method for Convolutional Neural Networks |
| MADGRAD | A Momentumized, Adaptive, Dual Averaged Gradient Method for Stochastic |
| RAdam | On the Variance of the Adaptive Learning Rate and Beyond |
| Ranger | a synergistic optimizer combining RAdam and LookAhead, and now GC in one optimizer |
| Ranger21 | a synergistic deep learning optimizer |
| Lamb | Large Batch Optimization for Deep Learning |
| Shampoo | Preconditioned Stochastic Tensor Optimization |
| Nero | Learning by Turning: Neural Architecture Aware Optimisation |
| Adan | Adaptive Nesterov Momentum Algorithm for Faster Optimizing Deep Models |
| Adai | Disentangling the Effects of Adaptive Learning Rate and Momentum |
| SAM | Sharpness-Aware Minimization |
| ASAM | Adaptive Sharpness-Aware Minimization |
| GSAM | Surrogate Gap Guided Sharpness-Aware Minimization |
| D-Adaptation | Learning-Rate-Free Learning by D-Adaptation |
| AdaFactor | Adaptive Learning Rates with Sublinear Memory Cost |
| Apollo | An Adaptive Parameter-wise Diagonal Quasi-Newton Method for Nonconvex Stochastic Optimization |
| NovoGrad | Stochastic Gradient Methods with Layer-wise Adaptive Moments for Training of Deep Networks |
| Lion | Symbolic Discovery of Optimization Algorithms |
| Ali-G | Adaptive Learning Rates for Interpolation with Gradients |
| SM3 | Memory-Efficient Adaptive Optimization |
| AdaNorm | Adaptive Gradient Norm Correction based Optimizer for CNNs |
| RotoGrad | Gradient Homogenization in Multitask Learning |
| A2Grad | Optimal Adaptive and Accelerated Stochastic Gradient Descent |
| AccSGD | Accelerating Stochastic Gradient Descent For Least Squares Regression |
| SGDW | Decoupled Weight Decay Regularization |
| ASGD | Adaptive Gradient Descent without Descent |
| Yogi | Adaptive Methods for Nonconvex Optimization |
| SWATS | Improving Generalization Performance by Switching from Adam to SGD |
| Fromage | On the distance between two neural networks and the stability of learning |
| MSVAG | Dissecting Adam: The Sign, Magnitude and Variance of Stochastic Gradients |
| AdaMod | An Adaptive and Momental Bound Method for Stochastic Learning |
| AggMo | Aggregated Momentum: Stability Through Passive Damping |
| QHAdam | Quasi-hyperbolic momentum and Adam for deep learning |
| PID | A PID Controller Approach for Stochastic Optimization of Deep Networks |
| Gravity | a Kinematic Approach on Optimization in Deep Learning |
| AdaSmooth | An Adaptive Learning Rate Method based on Effective Ratio |
| SRMM | Stochastic regularized majorization-minimization with weakly convex and multi-convex surrogates |
| AvaGrad | Domain-independent Dominance of Adaptive Methods |
| PCGrad | Gradient Surgery for Multi-Task Learning |
| AMSGrad | On the Convergence of Adam and Beyond |
| Lookahead | k steps forward, 1 step back |
| PNM | Manipulating Stochastic Gradient Noise to Improve Generalization |
| GC | Gradient Centralization |
| AGC | Adaptive Gradient Clipping |
| Stable WD | Understanding and Scheduling Weight Decay |
| Softplus T | Calibrating the Adaptive Learning Rate to Improve Convergence of ADAM |
| Un-tuned w/u | On the adequacy of untuned warmup for adaptive optimization |
| Norm Loss | An efficient yet effective regularization method for deep neural networks |
| AdaShift | Decorrelation and Convergence of Adaptive Learning Rate Methods |
| AdaDelta | An Adaptive Learning Rate Method |
| Amos | An Adam-style Optimizer with Adaptive Weight Decay towards Model-Oriented Scale |
| SignSGD | Compressed Optimisation for Non-Convex Problems |
| Sophia | A Scalable Stochastic Second-order Optimizer for Language Model Pre-training |
| Prodigy | An Expeditiously Adaptive Parameter-Free Learner |

Supported LR Scheduler

You can list the supported learning rate schedulers with the code below.

from pytorch_optimizer import get_supported_lr_schedulers

supported_lr_schedulers = get_supported_lr_schedulers()

LR Scheduler |

Description |

Official Code |

Paper |

Citation |

|---|---|---|---|---|

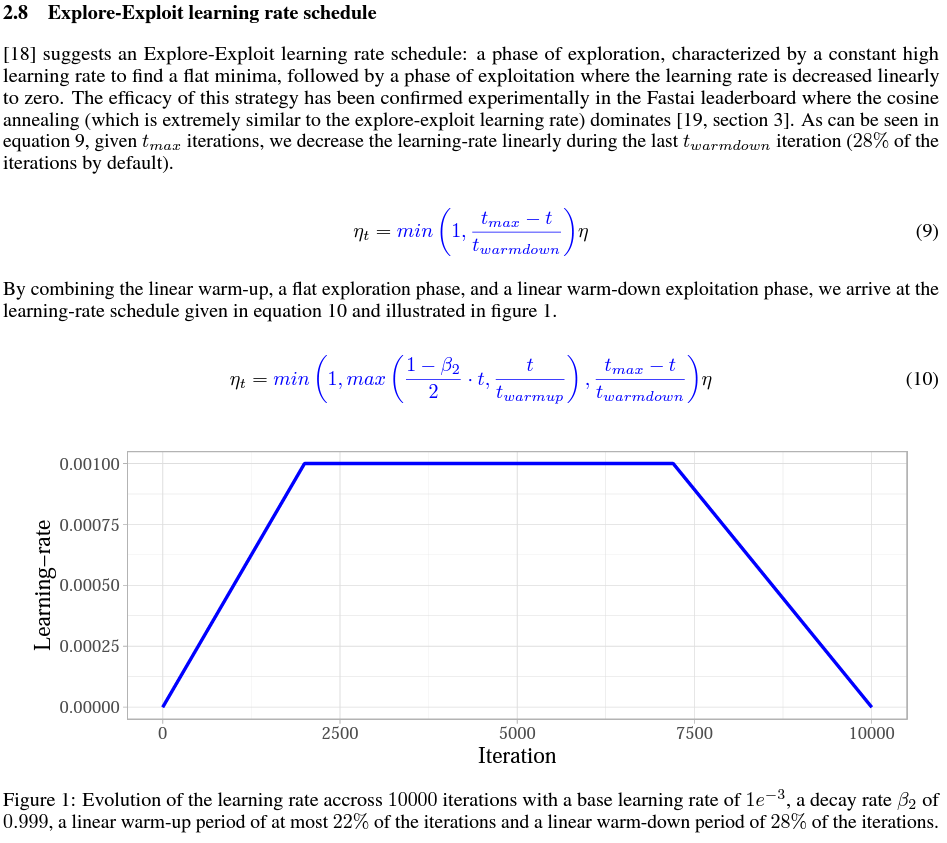

Explore-Exploit |

Wide-minima Density Hypothesis and the Explore-Exploit Learning Rate Schedule |

|||

Chebyshev |

Acceleration via Fractal Learning Rate Schedules |

Useful Resources

The ideas below help regularize and stabilize training. Most of them are used in the Ranger21 optimizer, and most of the figures are taken from the Ranger21 paper.

Adaptive Gradient Clipping

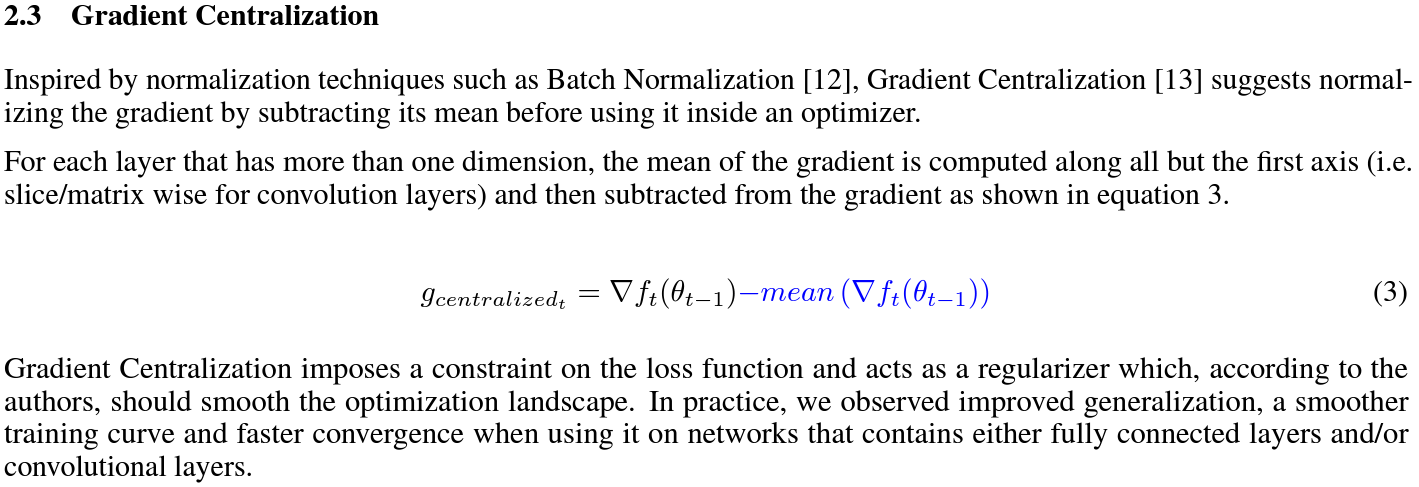

Gradient Centralization


Gradient Centralization (GC) operates directly on gradients by centralizing the gradient to have zero mean.
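The centralization step can be illustrated with a minimal, dependency-free sketch. It is not the library's implementation: the function name `centralize_gradient` is hypothetical, gradients are plain nested lists rather than tensors, and the mean is assumed to be taken per row, where each row stands for the weight vector of one output unit.

```python
from typing import List


def centralize_gradient(grad: List[List[float]]) -> List[List[float]]:
    """Return a copy of `grad` in which every row has zero mean.

    Assumption: each row is the gradient of one output unit's weight vector.
    """
    centralized = []
    for row in grad:
        mean = sum(row) / len(row)
        # Subtract the row mean so the row sums to zero.
        centralized.append([g - mean for g in row])
    return centralized


grad = [[1.0, 2.0, 3.0], [4.0, 4.0, 10.0]]
centralized = centralize_gradient(grad)
print(centralized)  # [[-1.0, 0.0, 1.0], [-2.0, -2.0, 4.0]]
```

With tensors, the same idea amounts to subtracting the mean over every dimension except the output dimension before the optimizer applies its update.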

Softplus Transformation

Passing the final variance denominator through the softplus function lifts extremely small values so that they remain usable.

paper : arXiv
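The effect of the softplus transformation described above can be sketched in plain Python. This is an illustration under stated assumptions, not the library's code: the helper `softplus` is written here by hand, and the defaults `beta=50.0` and `threshold=20.0` are assumed values chosen for the example.

```python
import math


def softplus(x: float, beta: float = 50.0, threshold: float = 20.0) -> float:
    """softplus(x) = log(1 + exp(beta * x)) / beta.

    For large beta * x the function is numerically equal to x, so x is
    returned directly to avoid overflow in exp().
    """
    if beta * x > threshold:
        return x
    return math.log1p(math.exp(beta * x)) / beta


# Second-moment estimates of very different magnitudes (illustrative values).
for exp_avg_sq in (1e-16, 1e-4, 1.0):
    root = math.sqrt(exp_avg_sq)
    denom = softplus(root)
    print(f"sqrt(v)={root:.1e}  softplus(sqrt(v))={denom:.6f}")
```

In this sketch the denominator can never fall below softplus(0) = ln(2) / beta, which is about 0.0139 for beta = 50, while values well above that floor pass through almost unchanged.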

Gradient Normalization

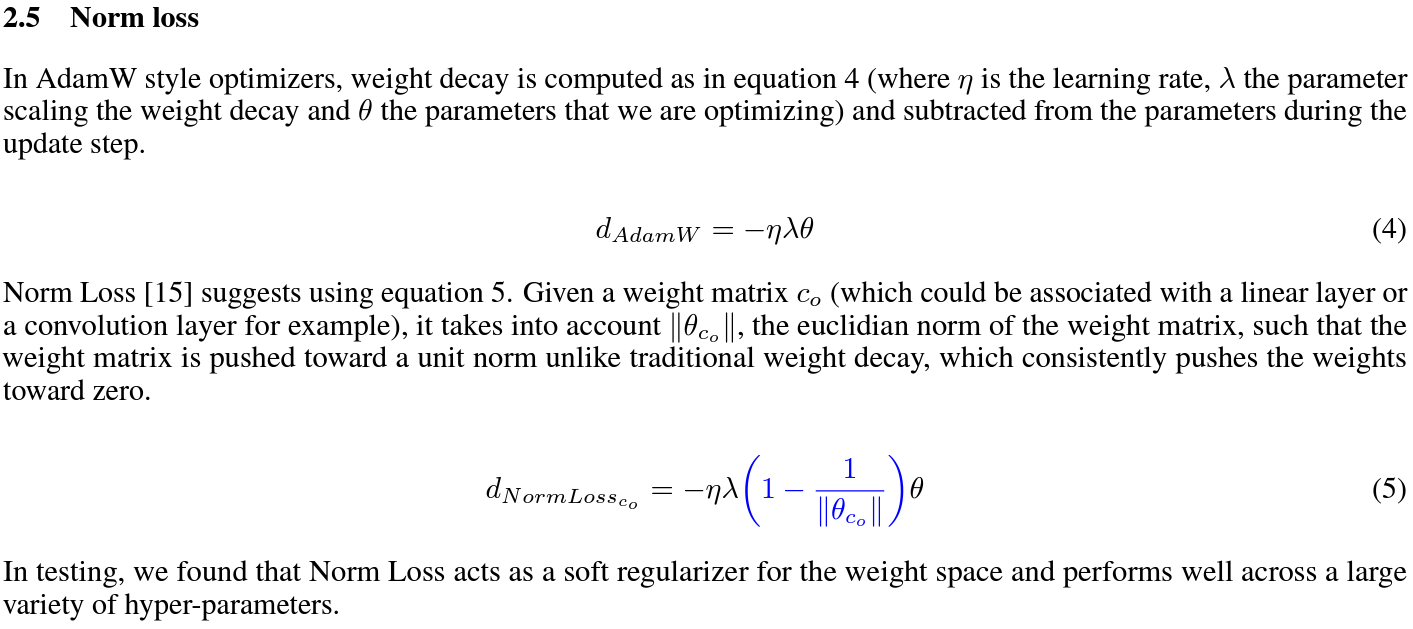

Norm Loss


paper : arXiv

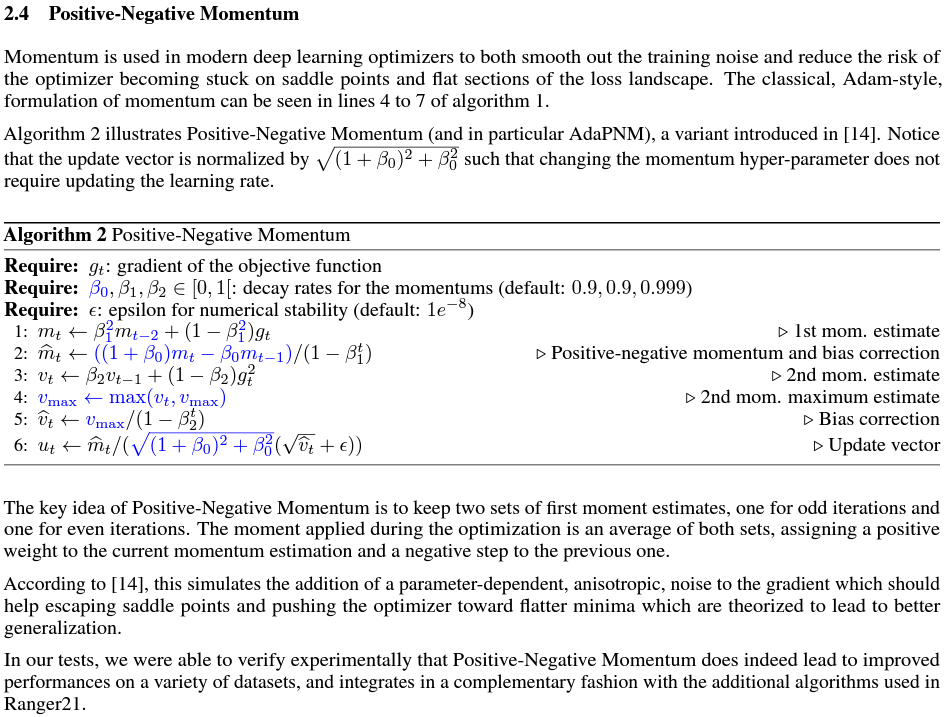

Positive-Negative Momentum


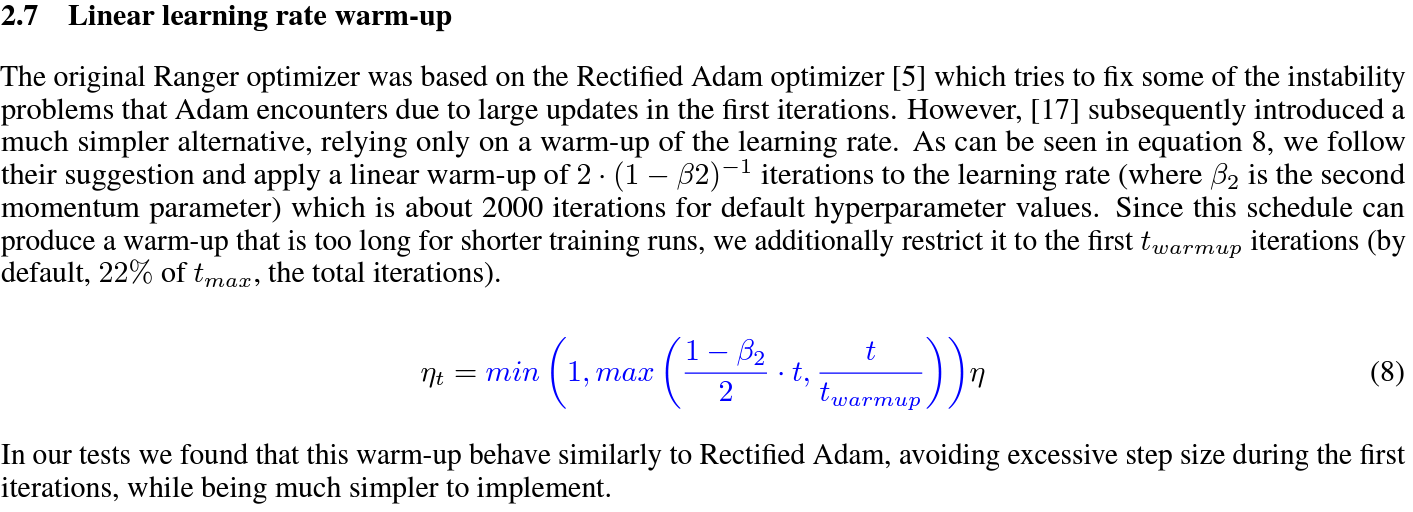

Linear learning rate warmup


paper : arXiv

Stable weight decay

Explore-exploit learning rate schedule

Lookahead

Chebyshev learning rate schedule

Acceleration via Fractal Learning Rate Schedules.

(Adaptive) Sharpness-Aware Minimization

On the Convergence of Adam and Beyond

Improved bias-correction in Adam

Adaptive Gradient Norm Correction

Citation

Please cite the original authors of the optimization algorithms. If you use this software, please cite it as shown below, or use the “Cite this repository” button.

@software{Kim_pytorch_optimizer_Optimizer_and_2022,

author = {Kim, Hyeongchan},

month = {1},

title = {{pytorch_optimizer: optimizer and lr scheduler collections in PyTorch}},

version = {1.0.0},

year = {2022}

}
