Spectral Clustering

Overview

This is a Python re-implementation of the spectral clustering algorithm in the paper Speaker Diarization with LSTM.

Disclaimer

This is not the original implementation used by the paper.

Specifically, this implementation uses the K-Means from scikit-learn, which does NOT support custom distance measures such as cosine distance.

Dependencies

- numpy

- scipy

- scikit-learn

Installation

Install the package by:

pip3 install spectralcluster

or

python3 -m pip install spectralcluster

Tutorial

Use the predict() method of the SpectralClusterer class to perform spectral clustering:

from spectralcluster import SpectralClusterer

clusterer = SpectralClusterer(
    min_clusters=2,
    max_clusters=100,
    p_percentile=0.95,
    gaussian_blur_sigma=1)

labels = clusterer.predict(X)

The input X is a numpy array of shape (n_samples, n_features), and the returned labels is a numpy array of shape (n_samples,).

For the complete list of parameters of the clusterer, see spectralcluster/spectral_clusterer.py.

Citations

Our paper is cited as:

@inproceedings{wang2018speaker,
  title={Speaker diarization with {LSTM}},
  author={Wang, Quan and Downey, Carlton and Wan, Li and Mansfield, Philip Andrew and Moreno, Ignacio Lopez},
  booktitle={2018 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP)},
  pages={5239--5243},
  year={2018},
  organization={IEEE}
}

FAQs

Laplacian matrix

Question: Why are you performing eigen-decomposition directly on the similarity matrix instead of its Laplacian matrix? (source)

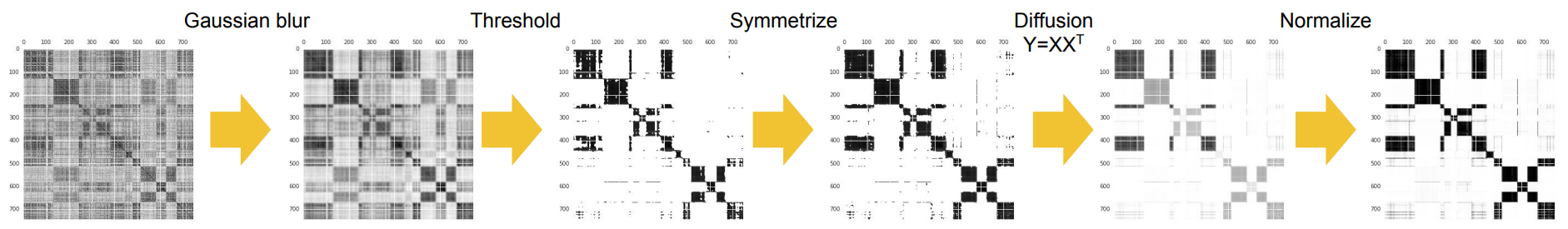

Answer: No, we are not performing eigen-decomposition directly on the similarity matrix. In the sequence of refinement operations, the first operation is CropDiagonal, which replaces each diagonal element of the similarity matrix with the maximum off-diagonal value of its row. After this operation, the matrix has properties similar to those of a standard Laplacian matrix.
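To make the CropDiagonal step concrete, here is a minimal numpy sketch of the operation as described above. It is not the code from this package: the function name crop_diagonal and the toy similarity matrix are made up for illustration, and it assumes a square matrix with at least two rows.

```python
import numpy as np


def crop_diagonal(affinity):
    """Replace each diagonal entry with the largest off-diagonal value in its row.

    Hypothetical helper for illustration only; not this package's API.
    """
    refined = np.array(affinity, dtype=float)  # work on a copy
    np.fill_diagonal(refined, -np.inf)         # exclude the diagonal from the row maximum
    row_max = refined.max(axis=1)              # largest off-diagonal value per row
    np.fill_diagonal(refined, row_max)         # write those maxima onto the diagonal
    return refined


# Toy cosine-similarity matrix: self-similarity on the diagonal is always 1.
similarity = np.array([
    [1.0, 0.8, 0.1],
    [0.8, 1.0, 0.3],
    [0.1, 0.3, 1.0],
])
cropped = crop_diagonal(similarity)
print(cropped)
```

Only the diagonal changes; the off-diagonal similarities are left as they were, and the input matrix is not modified.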

Question: Why don't you just use the standard Laplacian matrix?

Answer: Our Laplacian matrix is less sensitive (thus more robust) to the Gaussian blur operation.

Cosine vs. Euclidean distance

Question: Your paper says the K-Means should be based on Cosine distance, but this repository is using Euclidean distance. Do you have a Cosine distance version?

Answer: You can find a variant of this repository using Cosine distance for K-means instead of Euclidean distance here: FlorianKrey/DNC
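For background on how the two distances relate, the sketch below checks a standard identity: for L2-normalized vectors, the squared Euclidean distance equals twice the cosine distance. This is not taken from this repository or from FlorianKrey/DNC; the helper l2_normalize and the random embeddings are assumptions for illustration. Running Euclidean K-Means on normalized rows only approximates cosine K-Means, since the centroids are generally not unit-length.

```python
import numpy as np


def l2_normalize(x):
    """Scale each row to unit L2 norm (hypothetical helper, not part of this package)."""
    norms = np.linalg.norm(x, axis=1, keepdims=True)
    return x / np.maximum(norms, 1e-12)  # guard against division by zero


rng = np.random.default_rng(0)
embeddings = rng.normal(size=(5, 8))  # made-up data: 5 samples, 8 features
unit = l2_normalize(embeddings)

# Pairwise cosine distance: 1 - cosine similarity.
cosine_distance = 1.0 - unit @ unit.T

# Pairwise squared Euclidean distance between the normalized rows.
diff = unit[:, None, :] - unit[None, :, :]
squared_euclidean = np.sum(diff ** 2, axis=-1)

# For unit vectors: ||a - b||^2 = 2 - 2 * cos(a, b) = 2 * cosine_distance.
identity_holds = np.allclose(squared_euclidean, 2.0 * cosine_distance)
print(identity_holds)
```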

Misc

Our new speaker diarization systems are now fully supervised, powered by uis-rnn. Check this Google AI Blog.

To learn more about speaker diarization, here is a curated list of resources: awesome-diarization.

Hashes for spectralcluster-0.1.0-py3-none-any.whl

| Algorithm | Hash digest |
|---|---|
| SHA256 | 263cce6d833b4958a07c9d2fcdbf44045c3ac5db25244f9d4dbe96bf367be1c9 |
| MD5 | 43701b5dc1281487a6efc9381531515d |
| BLAKE2b-256 | 24a712c567ed32165ccaa0a40bd55384958751a2049891c109fb9a1d3996e7a0 |